citiesabc

Demystifying AI Series 1: The Pattern Recognition Paradox

18 Feb 2026

The Pattern Recognition Paradox: Why AI Sees Everything and Understands Nothing

From the book "Demystifying AI" by Dinis Guarda

"The measure of intelligence is the ability to change." — Albert Einstein

"We must address, individually and collectively, moral and ethical issues raised by cutting-edge research in artificial intelligence and biotechnology." — Klaus Schwab

At its core, artificial intelligence is mathematical pattern matching on an extraordinary scale. MIT's Computer Science and Artificial Intelligence Laboratory describes it as “high-dimensional statistical inference”: essentially, very sophisticated curve-fitting across millions of variables. This description is precise, not dismissive. For cities, economies, and the institutions shaping urban life, understanding it clearly is the foundation for understanding both AI's remarkable power and its equally remarkable limitations.

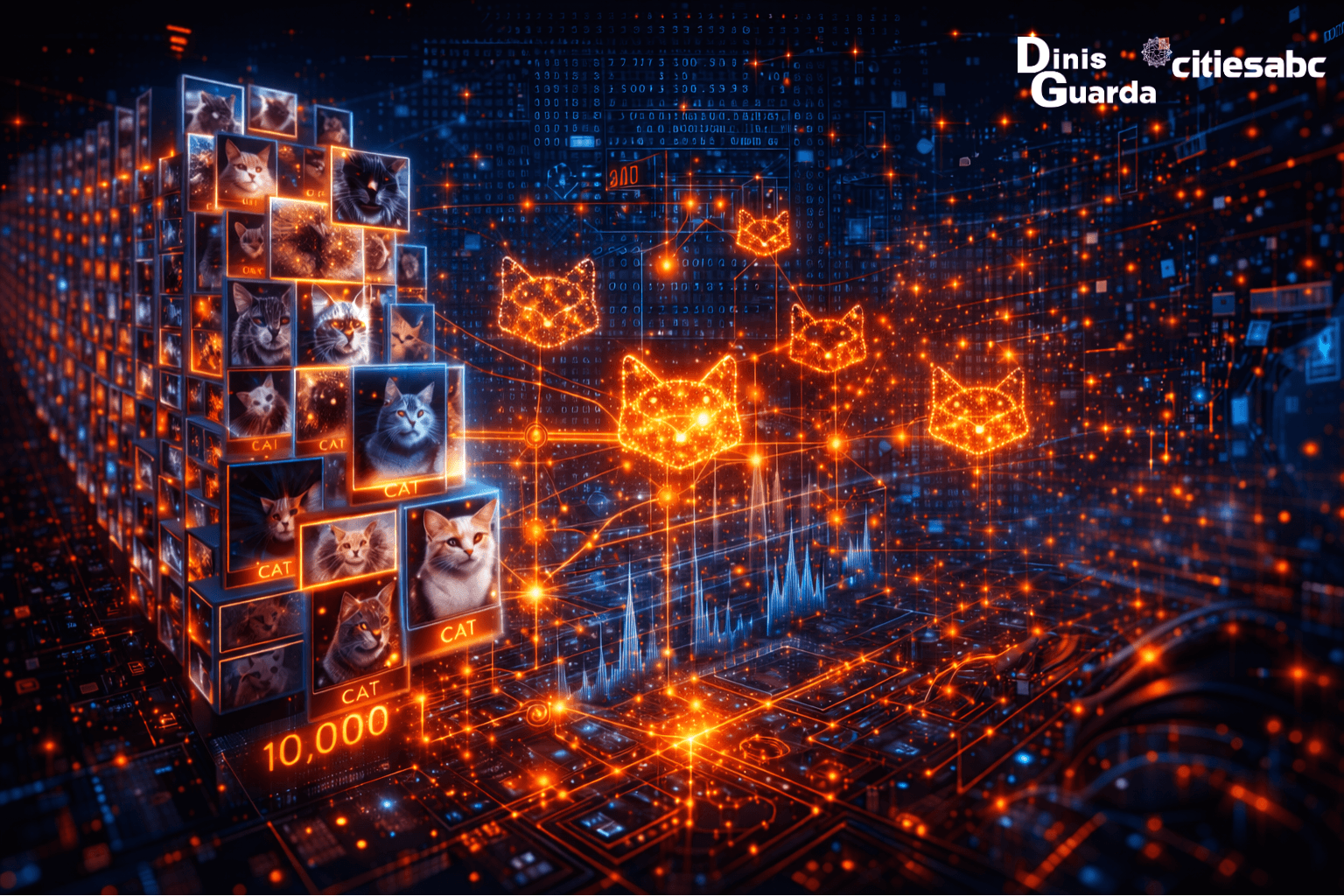

Here is what actually happens: you show an AI system ten thousand images labelled "cat." The algorithm adjusts its internal weights (plain numbers) until it finds mathematical patterns that correlate with "cat-ness": pointy ears, whiskers, certain colour distributions. But the system doesn't know what a cat is. It has never felt fur, doesn't understand predator-prey relationships, and has no concept of domestication or of cats' place in ancient Egyptian worship. It has found correlations. It has not gained comprehension.
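To make this concrete, here is a minimal sketch in Python. It assumes three invented, hand-made features per image (pointy ears, whiskers, a colour score) instead of real pixels, and uses plain logistic regression trained by gradient descent; it is an illustration of weight adjustment, not how any particular production vision system works. Everything the model "learns" ends up as three weights and a bias. Nothing in it refers to what a cat is.

```python
import math

# Hypothetical toy features per image: [pointy_ears, whiskers, colour_score].
# Labels: 1 = "cat", 0 = "not cat". Invented numbers, not real image data.
examples = [
    ([1.0, 1.0, 0.8], 1),
    ([1.0, 0.9, 0.6], 1),
    ([0.9, 1.0, 0.7], 1),
    ([0.0, 0.1, 0.2], 0),
    ([0.2, 0.0, 0.9], 0),
    ([0.1, 0.2, 0.1], 0),
]


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def predict(weights, bias, features):
    """Weighted sum of the features, squashed into a 0..1 'cat-ness' score."""
    return sigmoid(sum(w * x for w, x in zip(weights, features)) + bias)


def train(data, epochs=500, lr=0.5):
    """Logistic regression by stochastic gradient descent: only numbers change."""
    weights, bias = [0.0, 0.0, 0.0], 0.0
    for _ in range(epochs):
        for features, label in data:
            error = predict(weights, bias, features) - label
            weights = [w - lr * error * x for w, x in zip(weights, features)]
            bias -= lr * error
    return weights, bias


weights, bias = train(examples)
print("learned weights:", [round(w, 2) for w in weights], "bias:", round(bias, 2))
print("score for an unseen cat-like input:", round(predict(weights, bias, [1.0, 1.0, 0.5]), 3))
```

The printed weights are the whole of what the model "knows". Feed it any three numbers and it will return a score, whether or not those numbers describe anything resembling an animal.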

Oxford's Future of Humanity Institute frames this as a crucial distinction: AI has capability without comprehension. A language model can write a sonnet about heartbreak whilst having never experienced a heartbeat. For cities embedding AI into healthcare systems, financial infrastructure, public safety, and urban planning, this paradox is not abstract. It is the operational reality that every decision-maker must understand.

The Business and Urban Reality of Pattern Matching

This paradox doesn't stay theoretical. It plays out with real consequences across the industries and institutions already deploying AI at scale, many of them central to how modern cities function.

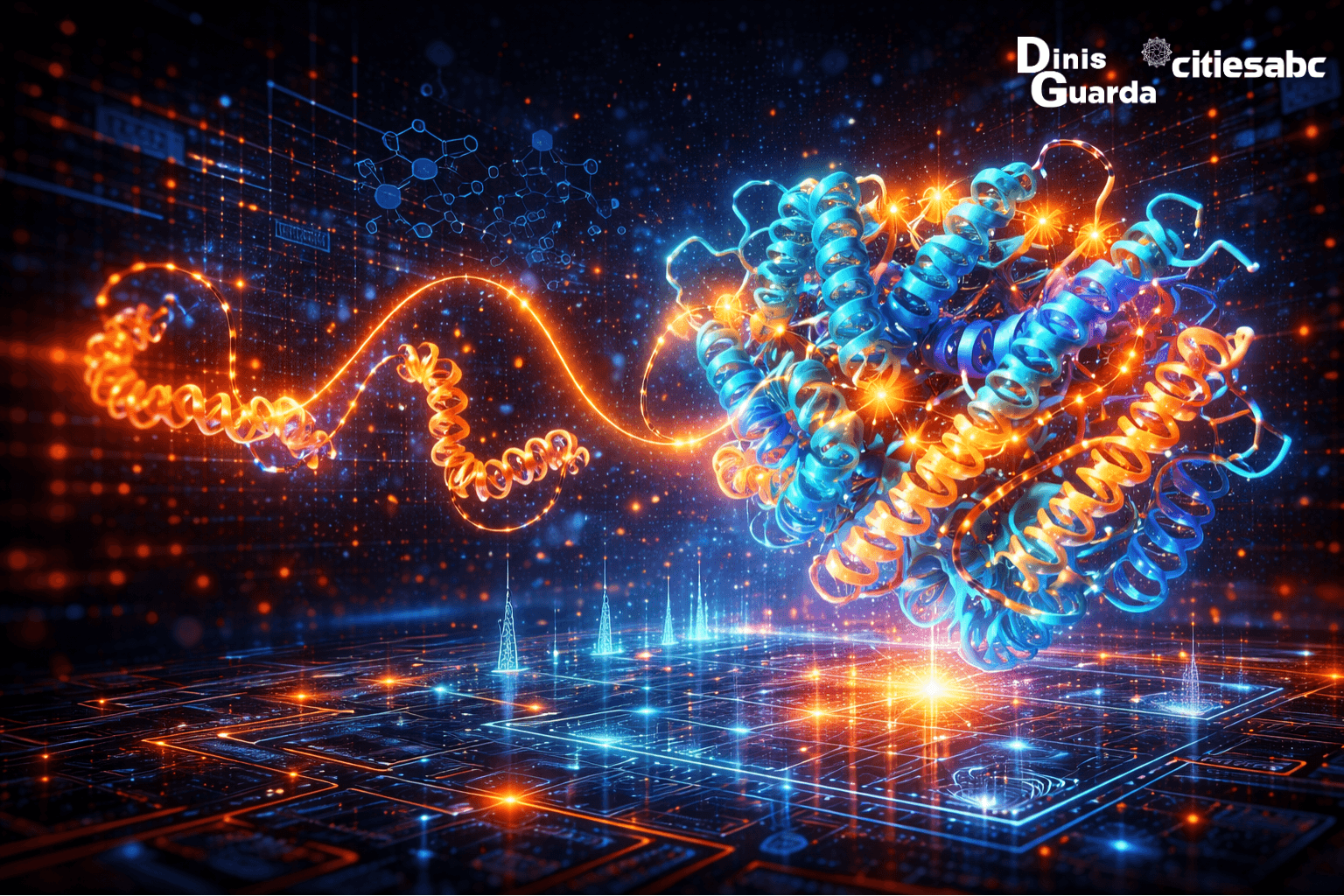

In healthcare, DeepMind's AlphaFold largely solved protein structure prediction, a fifty-year biological puzzle, opening paths to disease treatment. Yet the system couldn't explain why proteins fold the way they do, only predict the shapes they fold into. For city health systems integrating AI into diagnostics and early detection, the capability is extraordinary. The comprehension remains absent. These are not the same thing, and collapsing the distinction leads to misplaced trust in high-stakes environments.

In finance, JP Morgan's COIN system reviews twelve thousand commercial credit agreements in seconds, work that previously required an estimated three hundred and sixty thousand lawyer hours annually. But it cannot judge whether a contract is fair, only whether it matches learned patterns. For the financial systems underpinning urban economies, speed and scale do not substitute for judgment.

In autonomous vehicles, among the most visible AI applications reshaping city mobility, Tesla's AI processes sensor data a thousand times per second. But a child holding a stop sign at an unusual angle may go unrecognised, because the pattern doesn't match training data. The system sees; it does not understand what it is seeing. In urban environments, where unpredictability is constant, this distinction carries direct public safety implications.

Each of these cases illustrates the same underlying structure: extraordinary performance within the learned pattern space, and brittleness the moment reality steps outside it.

Where the Paradox Becomes Dangerous

The gap between capability and comprehension creates predictable failures, and they tend to appear precisely where the stakes are highest, in the systems cities use to govern, protect, and serve their populations.

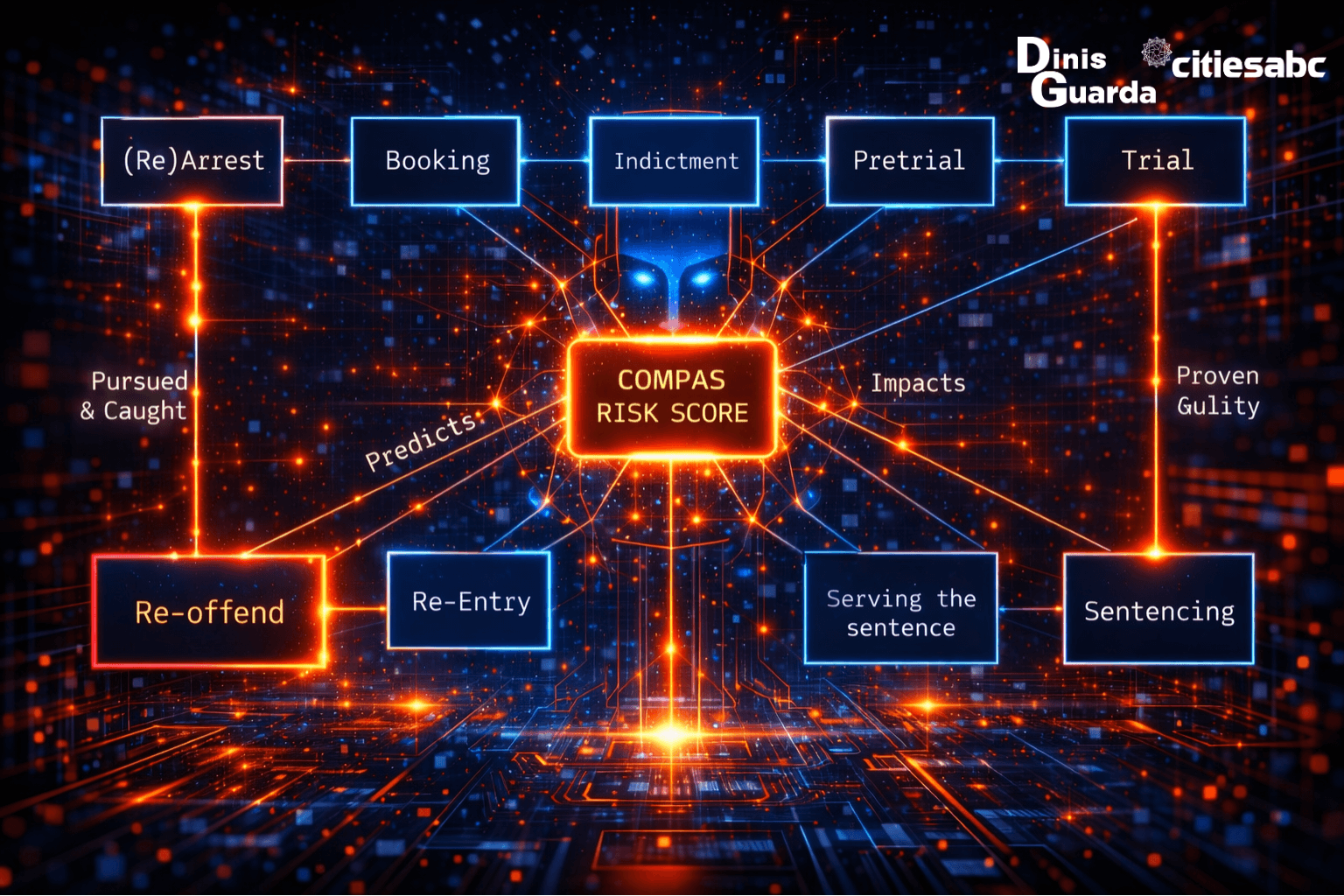

In criminal justice, the COMPAS algorithm recommended harsher sentences for Black defendants due to historical data patterns, perpetuating systemic bias. The algorithm didn't include race as a variable; it didn't need to. It used proxies (neighbourhood, employment history, social networks), all of which correlated strongly with racial demographics because the historical data itself reflected decades of unequal treatment. Cities deploying predictive tools in policing and sentencing face the same structural risk: the system finds real patterns, the patterns encode injustice, and it reports both with identical confidence.
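The proxy mechanism can be shown with a toy example. The sketch below is not COMPAS's method, which is proprietary; it uses invented records in which a hypothetical neighbourhood field correlates with group membership, and the historical label reflects heavier past policing of one neighbourhood. The scoring rule never sees the group, yet it flags one group four times as often as the other.

```python
from collections import defaultdict

# Hypothetical historical records: (group, neighbourhood, label_on_record).
# Invented numbers: group X lives mostly in "A", group Y mostly in "B", and
# the recorded label reflects heavier historical policing of neighbourhood "A".
history = (
    [("X", "A", 1)] * 30 + [("X", "A", 0)] * 50 +
    [("Y", "A", 1)] * 5 + [("Y", "A", 0)] * 15 +
    [("X", "B", 1)] * 5 + [("X", "B", 0)] * 15 +
    [("Y", "B", 1)] * 15 + [("Y", "B", 0)] * 65
)


def neighbourhood_rates(records):
    """Learn the share of positive labels per neighbourhood. Group is ignored."""
    totals, positives = defaultdict(int), defaultdict(int)
    for _group, hood, label in records:
        totals[hood] += 1
        positives[hood] += label
    return {hood: positives[hood] / totals[hood] for hood in totals}


def flag_rate_by_group(records, rates, threshold=0.3):
    """Share of each group flagged 'high risk' by the neighbourhood-only score."""
    counts, flagged = defaultdict(int), defaultdict(int)
    for group, hood, _label in records:
        counts[group] += 1
        flagged[group] += rates[hood] >= threshold
    return {g: flagged[g] / counts[g] for g in counts}


rates = neighbourhood_rates(history)
print(rates)                               # {'A': 0.35, 'B': 0.2}
print(flag_rate_by_group(history, rates))  # {'X': 0.8, 'Y': 0.2}
```

Removing the sensitive column changes nothing here, because the neighbourhood column carries the same information.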

In medical diagnosis, an AI trained predominantly on European datasets misidentified melanoma in darker skin tones at double the error rate. For city health systems serving diverse urban populations, this is not a peripheral concern. The system wasn't malfunctioning; it was doing exactly what it was built to do. The training data didn't represent the full range of human skin, and the system couldn't know what it hadn't seen.
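Gaps like this are easy to miss in a single headline accuracy figure; they tend to surface only when errors are broken down by group. A minimal audit sketch, using invented evaluation results for two hypothetical skin-tone groups (not data from the study described above):

```python
from collections import defaultdict


def error_rate_by_group(rows):
    """rows: (group, true_label, predicted_label). Misclassification rate per group."""
    totals, errors = defaultdict(int), defaultdict(int)
    for group, truth, pred in rows:
        totals[group] += 1
        errors[group] += truth != pred
    return {g: errors[g] / totals[g] for g in totals}


# Hypothetical results: 100 true melanoma cases per group, invented for illustration.
results = (
    [("lighter", 1, 1)] * 90 + [("lighter", 1, 0)] * 10 +
    [("darker", 1, 1)] * 80 + [("darker", 1, 0)] * 20
)

overall = sum(truth != pred for _, truth, pred in results) / len(results)
print(overall)                         # 0.15
print(error_rate_by_group(results))    # {'lighter': 0.1, 'darker': 0.2}
```

The overall figure of 0.15 looks uniform; only the per-group breakdown shows one group's error rate at double the other's.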

The system had no mechanism to distinguish between categories requiring contextual understanding rather than visual correlation. For cities navigating questions of cultural representation and digital public life, the lesson is direct: pattern recognition operates without the cultural literacy that urban governance demands.

What unites these failures is not technical incompetence. It is the structural absence of comprehension. The system cannot recognise the limits of its own pattern space. It delivers outputs with uniform confidence whether it is right or catastrophically wrong.

The Philosophical Crack

Here is where the paradox opens into something deeper, and where it becomes most relevant to how cities think about the role of AI in public life.

If AI can perform tasks once considered to require intelligence (diagnosing disease, drafting legal documents, translating languages) without understanding any of them, what does that reveal about intelligence itself?

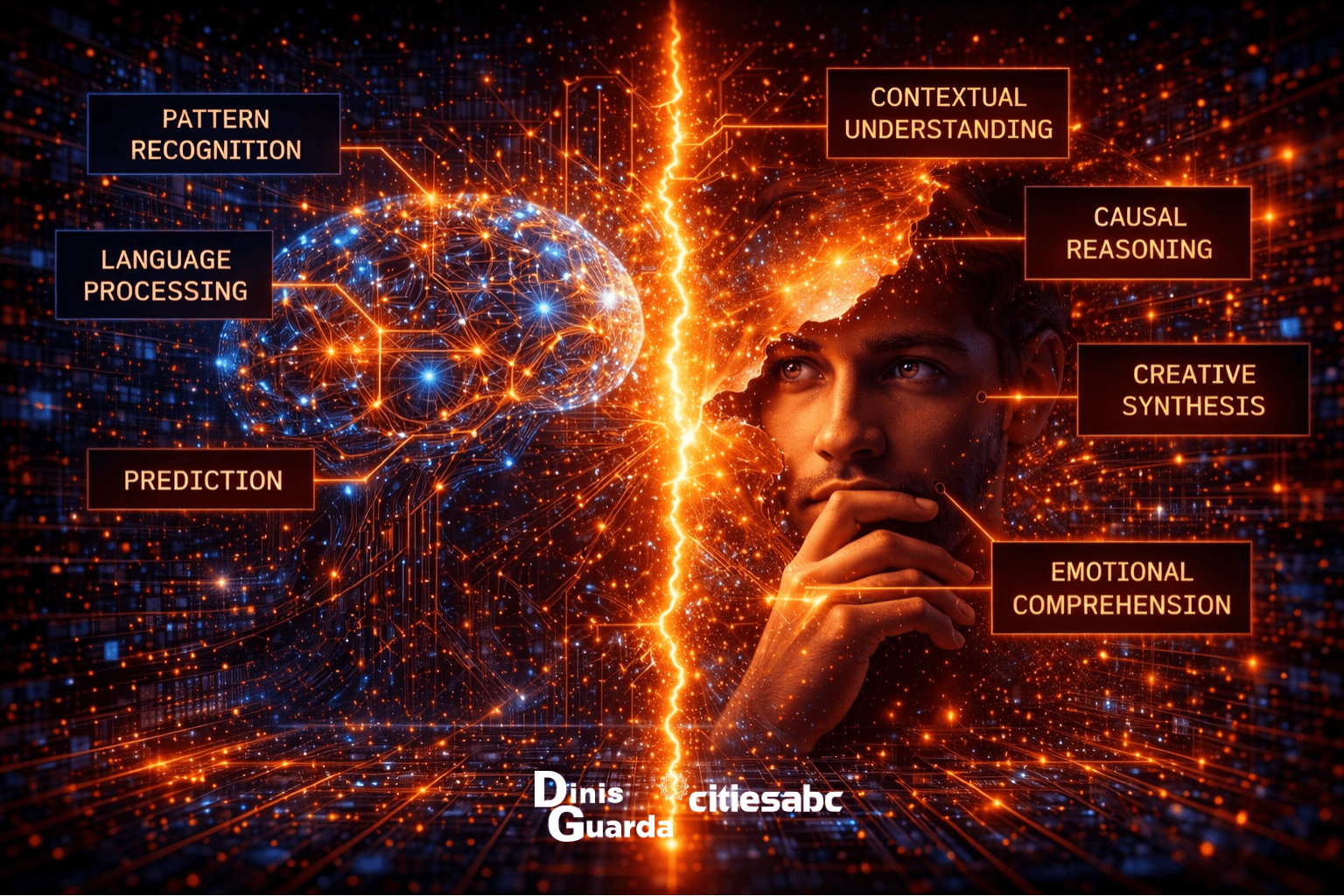

Cambridge's Leverhulme Centre for the Future of Intelligence suggests we are witnessing the "unbundling of intelligence." For most of human history, we experienced intelligence as unified: pattern recognition, contextual understanding, causal reasoning, emotional comprehension, and creative synthesis all arrived together, housed in the same human mind. AI makes the distinctions between them visible by excelling dramatically at some while remaining entirely absent from others.

Perhaps intelligence was never the monolithic thing we assumed. Perhaps we bundled these capacities together simply because humans happen to do all of them. AI separates the bundle and, in doing so, forces a reckoning with which capacities are genuinely irreplaceable and which are more mechanical than we cared to admit.

For cities, this unbundling has immediate practical meaning. Urban governance requires pattern recognition: identifying trends in mobility data, energy consumption, and public health indicators. But it also requires contextual judgment, ethical reasoning, democratic accountability, and cultural sensitivity. These are not the same capabilities. Treating AI as though it provides the latter because it excels at the former is where urban AI deployments go wrong.

The Risk Horizon

When systems see without understanding, the risks don't remain confined to individual errors. They scale, and in cities they scale across entire populations.

A biased human official might make a prejudiced decision ten times a day. A biased algorithm makes the same prejudiced decision ten thousand times an hour, and because it is mathematics, the discrimination becomes invisible, unquestionable, and immune to the ordinary social and democratic pressures that might otherwise correct it. Pattern matching doesn't experience conscience. It doesn't notice when it has crossed a line.

The confidence problem compounds this. AI outputs arrive formatted as authoritative answers. They are fluent, specific, and consistent. They don't hedge the way a thoughtful human expert would. Users, including expert users, tend to accept them, particularly in domains where the underlying process is opaque. This is what Carnegie Mellon's Human-Computer Interaction Institute identifies as authority bias: not that AI is persuasive, but that the form of AI output mimics the form of expert judgment, without the substance behind it. For city administrators, policymakers, and urban planners working with AI-generated analysis, this bias is a live operational risk.

The Augmentation Opportunity

The paradox resolves when we position AI as a perceptual tool rather than a decision-maker. Think of it as an electron microscope for patterns: it shows you things invisible to the naked eye, but a human must interpret what they mean. For cities, this reframing is not merely conceptual. It is the difference between AI that serves urban populations and AI that governs them without accountability.

Carnegie Mellon's Human-Computer Interaction Institute offers a framework that reflects this clearly:

AI proposes; humans dispose. The system flags anomalies. The expert (the urban planner, the public health official, the policymaker) investigates. Every AI output carries confidence scoring: uncertainty measures, not guarantees. Systems must show which patterns led to which conclusions. Critical decisions always route through human judgment. And algorithmic outputs are subject to continuous audit for pattern degradation and bias drift.
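One way to picture these principles as a workflow is the sketch below. The field names, the 0.9 confidence threshold, the 0.05 drift tolerance and the routing labels are all hypothetical choices for illustration, not part of the Carnegie Mellon framework itself. Every proposal ends with a person; the model's confidence only decides which kind of human attention it receives.

```python
from dataclasses import dataclass


@dataclass
class ModelOutput:
    case_id: str
    suggestion: str       # what the model proposes
    confidence: float     # model-reported score in [0, 1]: an uncertainty signal, not a guarantee
    evidence: list[str]   # which input patterns led to the suggestion
    critical: bool        # hypothetical flag, e.g. affects a resident's rights, safety or benefits


def route(output: ModelOutput, min_confidence: float = 0.9) -> str:
    """AI proposes; humans dispose. There is deliberately no fully automatic path."""
    if output.critical:
        return "accountable_official"   # critical decisions always go to human judgment
    if output.confidence < min_confidence:
        return "expert_investigation"   # low confidence: investigate before acting
    return "expert_confirmation"        # even confident proposals are confirmed, not auto-applied


def drift_alert(baseline_rate: float, current_rate: float, tolerance: float = 0.05) -> bool:
    """Continuous audit: flag when the share of positive outputs drifts from its audited baseline."""
    return abs(current_rate - baseline_rate) > tolerance


proposal = ModelOutput(
    case_id="hypothetical-001",
    suggestion="inspect water main in sector 4",
    confidence=0.72,
    evidence=["pressure drop pattern", "resembles past leak reports"],
    critical=False,
)
print(route(proposal))          # expert_investigation
print(drift_alert(0.10, 0.18))  # True
```

The design choice worth noting is that a high confidence score changes how a case is reviewed, not whether it is reviewed.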

This isn't a compromise between AI capability and human caution. It is the accurate description of what each contributes. AI detects correlations across vast urban datasets at speeds and scales no human can match. Humans bring causation, context, and meaning: the three things AI structurally cannot provide, and the three things democratic urban governance cannot function without.

The cities and organisations that deploy AI most effectively are those that resist the anthropomorphism that leads decision-makers to treat it as a colleague with judgment and experience. It is a tool of extraordinary power, operating on principles fundamentally unlike human cognition. Treating it as more than that doesn't unlock more value; it imports the risks without the safeguards.

The Path Forward

AI's pattern recognition paradox isn't a flaw awaiting a fix. It is a fundamental characteristic to understand. The technology excels at correlation detection across vast datasets. It fails at causation, context, and meaning. This makes it either an extraordinary tool or a dangerous oracle, depending entirely on whether the humans and institutions deploying it understand the difference.

The question for cities isn't whether AI will transform urban systems. It already has. The question is whether the people governing those systems will develop the wisdom to deploy these powerful, limited tools appropriately, understanding that every resident, every community, every neighbourhood brings irreplaceable contextual intelligence that no pattern-matching algorithm can replicate.

AlphaFold solved protein folding. It did not understand life. That distinction is not a reason to dismiss the achievement. It is a reason to understand precisely what the achievement was, and to remain clear-eyed about the distance between statistical correlation and genuine comprehension.

That distance is where human intelligence still lives. And in cities, it is where democratic accountability must remain.

In the next article, we examine what happens when pattern-completion goes wrong in ways the system cannot detect: the phenomenon of AI hallucination, and what it means for law, medicine, education, and public trust.

Questions for Reflection

In your organisation or city, where are you relying on pattern recognition, which is appropriate for AI, and where on contextual judgment, which requires human intelligence?

Can you identify three instances where AI confidence in urban decision-making might be high but understanding low?

How would city governance change if policymakers viewed AI outputs as "expert pattern detection" rather than "intelligent recommendations"?

What human capabilities become more valuable in urban institutions precisely because AI excels at pattern-matching?

If intelligence can be "unbundled," which types of intelligence define the irreplaceable human contribution to city governance?